Robert Claus

March 10, 2026

A Guide to Data Context in the Age of AI

Written by Robert Claus, Director of Engineering at Plotly

A great deal has been written on the need to provide LLMs context for them to make good decisions.

Specific to the field of data analytics, LLMs need context about data to perform highly reliable data analysis tasks. However, it can be very difficult to define what “context” will provide sufficient results for specific data. For many problems simple summary statistics are sufficient; for others sophisticated documentation seems to be necessary.

The cost of creating and maintaining documentation can be very high, so there is good reason to understand the problem and solution space.

What is data context?

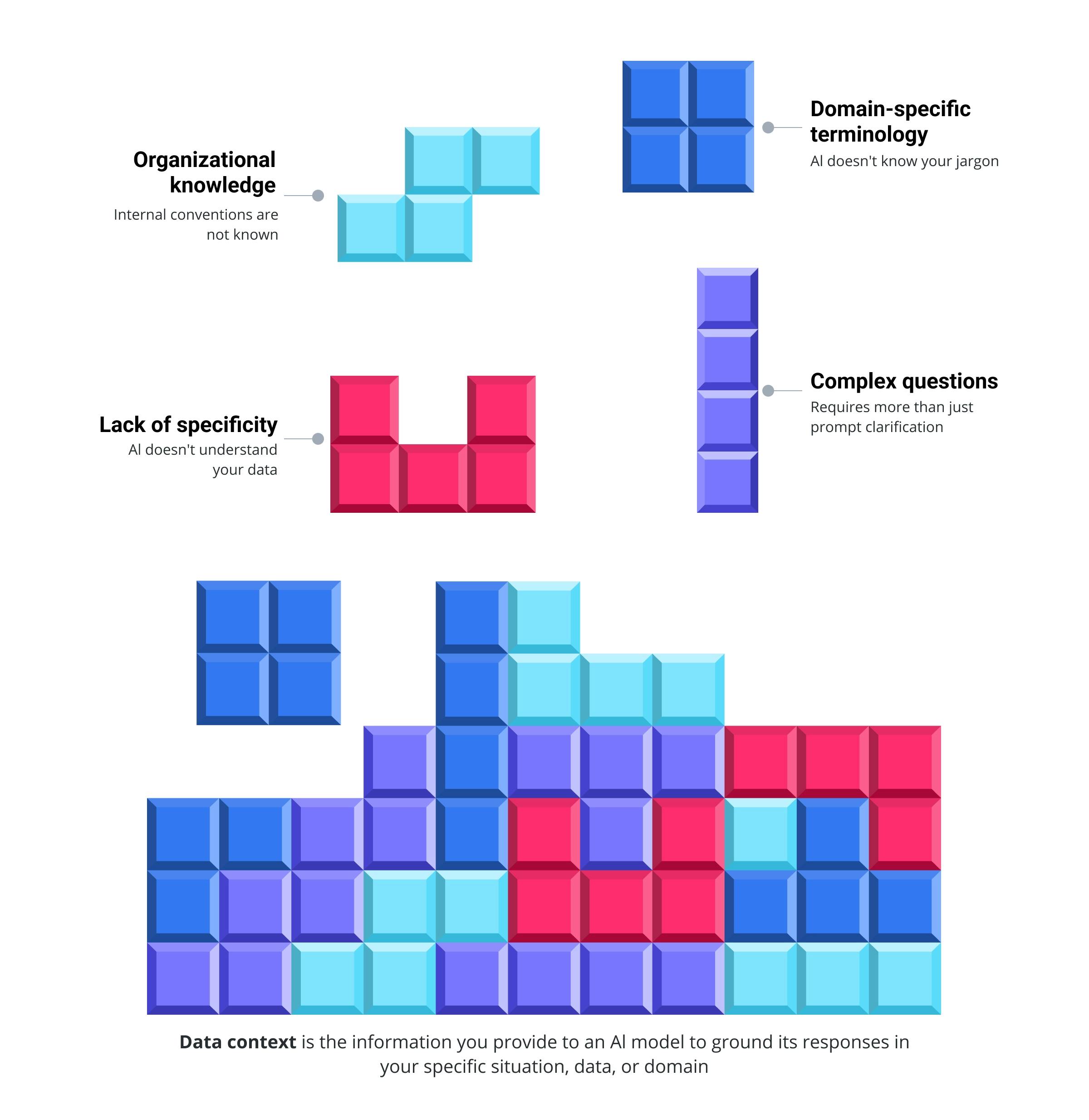

Context is the information you provide to an AI model to ground its responses in your specific situation, data, or domain, beyond what it already knows from training. In analytics, data context is the information that aligns an AI system's understanding with your specific analytical needs and dataset characteristics.

Some of the challenges solved by having data context to feed into LLMs

Data context can range from basic data summaries that describe column types and distributions to more sophisticated organizational knowledge like internal naming conventions and domain-specific terminology.

Without data context, a model relies on general knowledge, which works for common tasks but breaks down when applied to organization-specific data or terminology.

The amount of context required scales with the complexity of your question. Sometimes clarifying your prompt is enough, other times you need to supply full documents or dataset summaries to get a reliable answer.

Why is data context necessary for good analysis?

Data context matters because it aligns AI's understanding with your specific analytical needs and dataset characteristics, preventing generic guesses that look plausible but fail on real data.

Most AI tools will either ask you if they don't know something or take a best guess. Either way, they likely have some way for you to provide more context to clarify your request further.

In most cases, how you provide data context is situational since providing more context requires more work.

Four types of data context for LLMs

Understanding how to provide context means knowing which type to use for your specific analytical needs. These four types of data context below represent a spectrum from simple and generalizable to complex and organization-specific, each with distinct tradeoffs between integration effort and analytical precision.

Pre-trained knowledge

The simplest type of data context an LLM can use is knowledge it was already trained on.

For large frontier models, this context includes a lot of industry jargon that it will recognize right out of the box. For example, when building Plotly Studio we did not have to explain to the model what a "Violin Plot" was because it already knew.

However, this type of background knowledge is also somewhat dangerous. It often works amazingly for examples but then falls short when you try it on actual data specific to your organization. We've seen LLMs literally guess column names successfully based on common examples, which obviously doesn't work when it comes to real data.

Data summaries

For data analytics, a common next level of context is to provide a summary of the specific data you're looking at. That way, you and the LLM are grounded in talking about the same dataset. The Python library pandas offers a simple way to compute summary statistics for each column using the dataframe.describe() function. Add a few example rows and the model will have a very good sense of the data's structure.

In Plotly Studio we found that this data summary approach works extremely well for data visualization if the user already knows what data they are looking for. In most cases, the LLM is more than capable of inferring the meaning of different columns once it has that level of detail.

However, we found it was incredibly impractical to support searching for data this way. Often a data warehouse had thousands of tables, or the data itself needed to be retrieved in a non-tabular format.

Agentic exploration

In cases where data summaries fall short, we found that agentic exploration of the data was even more effective than providing an upfront summary. The tools we allow these agents to use are quite simple: writing and executing code snippets. For this type of context, the AI agent can iteratively build its own context from your data sources to understand your question better.

This approach uses a lot more LLM tokens since the agent may need to iterate multiple times, but also makes the system much more capable across different data requirements.

In general, we have found this approach to be incredibly effective. However, this simple approach only really helps the system explore the data itself, not understand internal terminology or existing norms within an organization.

Organizational context

This final level of understanding is generally the highest lift to integrate because it requires resources outside of the data itself. Often it requires:

- Extensive RAG indexing of internal documents

- Manually curated semantic layers

- Problem-specific documentation

While having this type of data context is feasible for large organizations, that level of coordination is often too high lift to warrant the integration effort across a company. Moreover, it introduces major governance questions around access to these non-data resources.

In our experience using Plotly Studio, the effort to reach this level of integration must be weighed carefully against the effort of guiding or validating individual results.

Context is only as good as how you use it

There are a wide range of LLM driven solutions available to data analysts today. Those solutions range from integrated tools such as one-off text-to-SQL in a database browser all the way to swarms of coding agents that can run hours without human interaction. Which of these tools you use can dramatically affect how well the context provided is used by the models.

On the simple end of the data analysis spectrum are one shot text-to-SQL tools. While modern tools use LLMs and are quite powerful, this type of tooling dates back to SQL’s sentence-like structure. The main limitation of any SQL writing is that it is limited to a subset of the “Analyze the data” stage of a data science project. Even with perfect data context and the right generated SQL, the form factor of these tools is limited to a small part of the greater journey.

A slightly more sophisticated approach is using an AI chatbot directly. These have the advantage of synthesizing concepts and summarizing data, so they can be leveraged for various parts of the data analysis process. However, they run into the opposite problem of integrated text-to-SQL tools in that generic chatbots generally cannot connect to your data directly. This leads to tedious workflows copying queries and data back and forth. Moreover, since simple AI chatbots pass data directly to the LLM there is a limit to how much data they can process - much less massive amounts of contextual information.

Luckily, many companies are evolving their AI chatbots to actually function as Coding Agents instead. Providing them with tools to execute code and approach requests iteratively instead of with a single response. These agentic chatbots can generally tackle full data science analysis from finding data to presenting results. Moreover, since they can write code the results they provide can be sophisticated reports with visuals rather than simple textual summaries.

Putting data context to work for better AI-assisted data analytics

Most analytics problems can be broken down into five distinct steps. There is a lot of iteration between these steps, but the work done in each one typically involves slightly different skills or technologies.

- Define the question

- Find the data you need

- Analyze the data

- Interpret the results

- Take action

For the most part when we hear about AI data analysis, it is focused on the most technical steps — preparing data, analyzing the data, and explaining the results. This leaves room for humans to define the questions and take appropriate actions.

Where AI excels and where humans still lead

Keeping AI focused on preparation, analysis, and presentation while humans handle questions and actions makes AI deployable without requiring deep organizational integration.

For example, if you wanted AI to take direct action it would need to be integrated with your entire company somehow to allow arbitrary actions. This deep integration alone would be significantly more complex than the AI system itself. Alternatively, if you scope the system to just a handful of corrective actions without deep integration you lose much of the opportunity for meaningful results.

Similarly, defining the question for the data analysis can require a lot of subtle cross-functional context. We've seen in our Plotly Studio work that LLMs can guess at interesting trends very effectively due to their broad domain knowledge, but deeper insights require company-specific context. Hence it makes sense for humans to provide the LLMs with specific questions.

On the other hand, our experience with Plotly Studio has taught us that data preparation, analysis, and presentation are very possible using LLMs. The combination of strong coding abilities and robust summarization skills make LLMs particularly well suited for data analytics tasks.

Moreover, in the last year we've seen agentic patterns with iterative tool calling make search tasks increasingly feasible. This means LLM-based tools can now effectively search for relevant data without needing to index it into a RAG database or possess excessive annotations.

With these abilities, the question when using agentic analytics tools is now whether the agent answered the question you meant, or if it misinterpreted your question. Like working with a new co-worker, this means building common context and terminology is critical.

How to approach AI-assisted data analysis

With these learnings in mind, we can make a simple but strong recommendation on how to approach data analysis with AI tools:

Start with powerful LLM coding tools and add data context as needed.

Here's how to implement this approach:

- Choose agentic coding tools as your foundation: Agentic coding tools provide the benefit of iterative tool calling and much more meaningful results from analysis. These tools can accomplish a lot with limited context since they can search and ask follow-up questions.

- Begin with minimal context: Start your analysis with basic information about what you're looking for. The agent can explore and ask follow-up questions to build understanding iteratively.

- Add targeted data context to guide the analysis: Supplement the agent's exploration with moderate amounts of data context to set it on the right path. This might include: data summaries for the specific tables you're analyzing, clarification of domain-specific terminology, or sample queries or examples of the type of output you expect

- Evaluate the cost-benefit of deeper semantic layers: The effort to curate semantic information is often most important when dealing with hundreds or thousands of concepts that need to be disambiguated for the model. Unfortunately, in that situation the cost of preparing that data can often be very high, meaning it should only be taken on if the return on investment is clear.

For a handful of analyses on such complex data, it's often better to hand-hold the LLM on individual analyses rather than curating context without a clear path to reuse.

We built Plotly Studio based on these learnings about data context and agentic exploration. If you want to see how this approach works with your own data, try Plotly Studio free.

About the author

Robert Claus is a software developer with over a decade of experience working with data. In his current role as Director of Engineering at Plotly, he leads engineering teams building cutting edge AI tools for data scientists. Before Plotly, he was CTO at the Madison startup DataChat where he led the team in building a natural language data processing platform. Robert also has experience in the healthcare data space, having worked at Epic Systems with international data exchange standards. His recent work has focused largely on applying LLM code generation techniques to data analytics.